My team uses Visual Studio Online for work item tracking and generally speaking it has pretty good baked-in reporting. I can see an overview of the current sprint, I can see capacity and I can see the burndown. One area that I’ve always felt it was missing, however, is a way to analyse the accuracy of our estimations.

We actually make pretty good estimations, in general terms: we rarely over-commit and it’s unusual for us to add anything significant to a sprint because we’ve flown through our original stories. This is based on a completely subjective guess at each person’s capacity and productivity which – over time – has given us a good overall figure that we know works for us.

But is that because our estimates are good, or because our bad estimates are fortuitously averaging out? Does our subjective capacity figure still work when we take some people out of the team and replace them with others?

This is an area where the reporting within VSO falls down and the limitation boils down to one issue: there is no way to (easily) get the original estimate for a task once you start changing the remaining work. So how can we get at this information?

Enter the API

I had seen a few articles on the integration options available for VSO but hadn’t really had a chance to look into it in detail until recently. The API is pretty extensive and you can run pretty much any query through the API that you can access through the UI, along with a bunch of useful team-related info. Unfortunately the API suffers the same limitation as the VSO portal, but we can work around it using a combination of a little effort and the Work Item History API.
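To give a flavour of what running a query through the API involves, here is a minimal sketch in Python that builds a Work Item Query Language (WIQL) query for every Task in a given iteration and wraps it in a JSON body. The function names are hypothetical. The `{"query": ...}` body shape and the doubling of single quotes for escaping are assumptions about the API that should be checked against the current documentation, and the sketch deliberately stops short of making the HTTP call.

```python
import json


def build_task_query(iteration_path: str) -> str:
    """Build a WIQL query selecting the IDs of all Tasks in one iteration."""
    # Assumption: WIQL string literals are single-quoted and an embedded
    # single quote is escaped by doubling it.
    safe_path = iteration_path.replace("'", "''")
    return (
        "SELECT [System.Id] FROM WorkItems "
        "WHERE [System.WorkItemType] = 'Task' "
        f"AND [System.IterationPath] = '{safe_path}'"
    )


def build_request_body(iteration_path: str) -> str:
    """Wrap the query in the JSON body assumed by this sketch."""
    return json.dumps({"query": build_task_query(iteration_path)})


if __name__ == "__main__":
    # "MyProject\Sprint 2" is a made-up iteration path for illustration.
    print(build_task_query(r"MyProject\Sprint 2"))
```

Building the query as a string makes it easy to swap in each sprint returned by the iterations list.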

Getting the Data

There is nothing particularly complicated about pulling the relevant data from VSO:

1. Get a list of sprints using the ClassificationNode API to access iterations

2. Use Work Item Query Language to build a dynamic query and get the results through the Query API. This gives us the IDs of each Task in the sprint

3. For each Task, use the Work Item History API to get a list of all updates

4. Use the update history to build up a picture of the initial state of each task

Point 4 has a few caveats, however. The history API only records the fields that have changed in each revision so we don’t always get a complete picture of the Task from a single update. There are a few scenarios that need to be handled:

1. Task is created in the target sprint and has a time estimate assigned at the same time. This is then reduced during the sprint as the Task moves towards completion

2. Task is created in the target sprint but a time estimate is assigned at a later date before having time reduced as the sprint progresses

3. Task is created in another sprint or iteration with a time assigned, then moved to the target sprint at a later date

4. Task is created and worked on in another sprint, then is moved to the target sprint having been partially completed

The simplest scenario (#1 above) would theoretically mean that we could take the earliest update record with the correct sprint. However, scenario 2 means that the first record in the correct sprint would have a time estimate of zero. Worse, because we only get *changes* from the API we wouldn’t have the correct sprint ID on the same revision as the new estimate: it wouldn’t have changed!

The issue with scenario 3 is similar to #2: when the Task is moved to the target sprint the time estimate isn’t changed so isn’t included in the revision.

A simplistic solution that I initially tried was to take the maximum historical time estimate for the task (with the assumption that time goes down as the sprint progresses, not up). Scenario 4 puts an end to this plan as the maximum time estimate could potentially be outside of the current sprint. If I move a task into a sprint with only half its work remaining, I don’t really want to see the other half as being completed in this sprint.
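A small worked example makes the problem concrete. The history below is made up for illustration and uses a simplified record shape (hypothetical `sprint` and `remaining` keys, present only when that value changed), not the real API payload:

```python
# Hypothetical history for one task in scenario 4. Each entry holds only the
# values that changed in that revision, oldest first.
history = [
    {"sprint": "Sprint 1", "remaining": 8.0},  # created and estimated in Sprint 1
    {"remaining": 4.0},                        # half the work done in Sprint 1
    {"sprint": "Sprint 2"},                    # moved into the target sprint
    {"remaining": 1.0},                        # worked on during Sprint 2
]


def naive_original_estimate(updates):
    """Largest remaining-work value ever recorded, regardless of sprint."""
    values = [u["remaining"] for u in updates if "remaining" in u]
    return max(values, default=0.0)


print(naive_original_estimate(history))  # 8.0
```

The naive maximum reports 8 hours for Sprint 2, even though only 4 hours of the task ever entered that sprint.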

Calculating the Original Estimate: Solution

The solution that I eventually went with here was to iterate through every historical change to the work item, oldest first, and store the “current” sprint and remaining work as each change was made. That allows us to get the amount of remaining work at each update alongside the sprint in which it occurred; from this point, taking the maximum of the remaining work values recorded while the Task was in the target sprint gives us a good number for the original amount of work that we estimated.
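A minimal sketch of this replay, written in Python for brevity, might look like the following. It assumes each update is a dictionary with a `fields` entry mapping a field's reference name to an object carrying a `newValue`, and that updates arrive oldest first; both the record shape and the function name are assumptions for illustration, so check them against the real response before relying on them.

```python
ITERATION = "System.IterationPath"
REMAINING = "Microsoft.VSTS.Scheduling.RemainingWork"


def original_estimate(updates, target_sprint):
    """Replay updates oldest-first and return the largest remaining-work
    value seen while the task was in target_sprint."""
    current_sprint = None
    current_remaining = 0.0
    best = 0.0
    for update in updates:
        fields = update.get("fields", {})
        # Only changed fields are present, so carry the last known values forward.
        if ITERATION in fields:
            current_sprint = fields[ITERATION].get("newValue")
        if REMAINING in fields:
            current_remaining = float(fields[REMAINING].get("newValue") or 0.0)
        if current_sprint == target_sprint:
            best = max(best, current_remaining)
    return best


# Scenario 4: half the work was done before the move into "Sprint 2".
moved_part_done = [
    {"fields": {ITERATION: {"newValue": "Sprint 1"}, REMAINING: {"newValue": 8}}},
    {"fields": {REMAINING: {"newValue": 4}}},
    {"fields": {ITERATION: {"newValue": "Sprint 2"}}},
    {"fields": {REMAINING: {"newValue": 1}}},
]
print(original_estimate(moved_part_done, "Sprint 2"))  # 4.0
```

Because the sprint and the remaining work are carried forward between revisions, the revision that moves the Task still "sees" the last estimate, and a revision that adds an estimate later still "sees" the sprint.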

It does rely on the assumption that Task estimates aren’t increased after work has started (e.g. start at 2 hours, get 1 hour done then realise there’s more work so increase back to 2) but in this scenario we tend to create new tasks instead of adjusting existing ones (we did find more work, after all) which works for us.

Tying it all Together

Once I was able to get at the data it was relatively simple to wrap a reporting service around the implementation. I went with node & express for the server-side implementation with a sprinkling of angular on top for the client, but visualising the data wasn’t the challenge here!

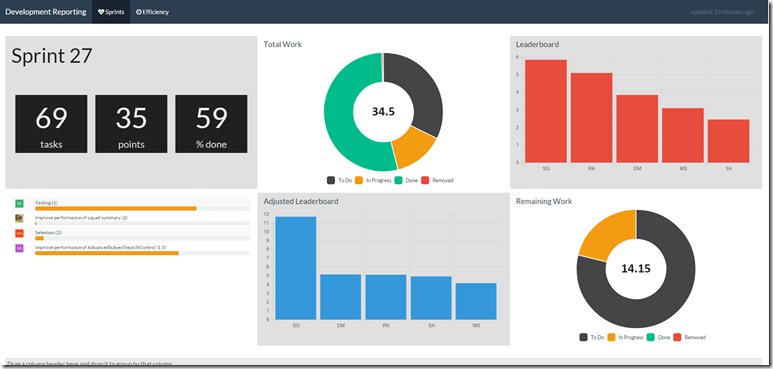

With this data available I can see a clear breakdown of how different developers affect the overall productivity of the team and can make decisions off the back of this. I have also seen that having a live dashboard displaying some of the key metrics acts as a bit of a motivator for the people who aren’t getting through the work they expect to, which can’t be a bad thing.

I currently have the following information displayed:

- Total remaining, completed and in-progress work based on our initial estimates

- Percentage completion of the work

- Absolute leaderboard; i.e. who is getting through the most work based on the estimates

- Adjusted leaderboard; i.e. who is getting through the most work compared to our existing subjective estimates

- Current tasks

I hope that the VSO API eventually reaches a point that I can pull this information out without needing to write code, but it’s good to know I can get the data if I need it!